Your CRM knows the discount policy. It does not know why your best rep ignored it last quarter and still closed the deal.

Every enterprise AI agent starts with the same foundation: rules. Discount thresholds, escalation policies, approval hierarchies, SLA timelines. These are the guardrails that define how the business is supposed to operate. And for most AI deployments today, rules are where the knowledge stops.

The problem is that rules describe the policy. They do not describe what happens when the policy meets reality.

Foundation Capital’s Ashu Garg and Jaya Gupta named this gap in their December 2025 essay, AI’s Trillion-Dollar Opportunity: Context Graphs. Their core insight is that enterprise software is very good at recording outcomes, such as the final price, the escalated ticket, or the approved discount. What it does not record is the reasoning behind those outcomes: which exceptions applied, what precedent mattered, and who approved what and why. That context still lives in Slack threads, side conversations, and people’s heads. It has rarely been treated as data.

They call that missing layer decision traces. In a follow-up essay one month later, they sharpened the definition: “A context graph is institutional memory for how an organization makes decisions: not how the process doc says it should, but how it actually works in practice.” The distinction between rules and decision traces may be the most consequential gap in enterprise AI right now.

What rules get you

Rules are essential. No enterprise AI agent should operate without them. They encode the boundaries, policies, and logic that make business processes repeatable and auditable.

A renewal agent with access to rules knows that the standard discount ceiling is 15%, that contracts over $500K require VP approval, and that pricing exceptions need documentation. Those rules are explicit, version-controlled, and available in the system of record.
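Because these rules are explicit, they translate directly into code. The sketch below is a minimal illustration, assuming the 15% ceiling, the $500K VP threshold, and the documentation requirement are the whole policy; `RenewalRequest`, `required_actions`, and the constant names are hypothetical, not any product's API.

```python
from dataclasses import dataclass

# Thresholds from the example above; the names are hypothetical.
DISCOUNT_CEILING = 0.15          # standard discount ceiling (15%)
VP_APPROVAL_THRESHOLD = 500_000  # contracts over $500K need VP approval


@dataclass
class RenewalRequest:
    contract_value: float
    discount: float                  # as a fraction, e.g. 0.20 for 20%
    has_exception_docs: bool = False


def required_actions(req: RenewalRequest) -> list:
    """Return the rule-driven steps a renewal needs before it can close."""
    actions = []
    if req.contract_value > VP_APPROVAL_THRESHOLD:
        actions.append("vp_approval")
    if req.discount > DISCOUNT_CEILING:
        # Assumption: anything above the ceiling counts as a pricing exception
        actions.append("pricing_exception")
        if not req.has_exception_docs:
            actions.append("exception_documentation")
    return actions


print(required_actions(RenewalRequest(contract_value=120_000, discount=0.20)))
# ['pricing_exception', 'exception_documentation']
```

Notice what the function cannot express: it can flag a 20% discount as an exception, but it has no way to say whether that exception is justified.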

For straightforward transactions, rules are enough. The invoice matches the PO, the discount falls within policy, and the approval chain is clear. The agent acts, and the outcome is correct.

But most enterprise decisions are not straightforward.

Where rules fall short

Consider a renewal agent handling a mid-market account. The customer’s support ticket volume spiked 40% over the past quarter. Their NPS score dropped.

The account manager flagged churn risk in a Slack thread three weeks ago and recommended a 20% discount, five points above the standard ceiling. The customer was evaluating a competitor, and losing them would cost more than the margin hit.

The VP approved it verbally in a standup. The discount was applied. The renewal closed. The CRM recorded the outcome: 20% discount, renewed.

What the CRM did not record: the support ticket trend from Zendesk, the churn risk signal from PagerDuty’s usage data, the Slack conversation where the account manager laid out the reasoning, or the verbal approval in the standup that made it legitimate. The rules said 15%. The decision was 20%. And the reasoning that bridged that gap disappeared the moment the deal closed.

Now hand that same account to an AI agent twelve months later. The agent sees the contract, the discount history, and the policy. It does not see the decision trace. So it either enforces the 15% ceiling and risks losing the account, or it sees the 20% precedent and applies it blindly, without understanding the conditions that justified it.

Both outcomes are wrong. One is too rigid. The other is too permissive. Neither reflects how the organization actually makes this decision.

Decision traces are the operating logic your systems never captured

Garg and Gupta make a useful distinction in their essays. Enterprise software captures what happened. Decision traces capture how and why it happened. They are the raw material of organizational memory.

Every time a human navigates an exception, weighs competing signals, or applies judgment that deviates from documented policy, a decision trace is created.

The problem is that most of these traces evaporate. They live in the white space between applications: email chains, team communication threads, cross-functional discussions, and the tribal knowledge that accumulates in people’s heads.

Orchestration platforms are uniquely positioned to capture these traces because they sit in the execution path. They see the full context at decision time: what inputs were gathered across systems, what policy was evaluated, what exception route was invoked, who approved, and what state was written back. When an agent operates through an orchestration layer rather than inside a single application, the decision trace becomes a natural byproduct of the work itself.
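One way to picture a decision trace is as a structured record with a field for each thing the orchestration layer observes at decision time. The sketch below is an assumption about shape only, not how Workato or any other platform stores traces; `DecisionTrace`, its field names, and the example values (taken from the mid-market renewal above) are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionTrace:
    """Hypothetical record of one decision, captured in the execution path."""
    decision_id: str
    inputs: dict                    # signals gathered, keyed by source system
    policy_evaluated: str           # the rule that was checked
    exception_route: Optional[str]  # exception path invoked, if any
    approved_by: Optional[str]      # who made the exception legitimate
    state_written: dict             # what was written back to the system of record
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Serialize so the trace can be stored and queried later
        return json.dumps(asdict(self), sort_keys=True)


# The mid-market renewal from the example, expressed as a trace
trace = DecisionTrace(
    decision_id="renewal-mid-market-001",
    inputs={
        "support": {"ticket_volume_change_qoq": 0.40},
        "survey": {"nps_trend": "down"},
        "slack": {"churn_risk_flagged": True, "competitor_evaluation": True},
    },
    policy_evaluated="discount_ceiling_15pct",
    exception_route="churn_risk_retention",
    approved_by="vp_sales",
    state_written={"discount": 0.20, "status": "renewed"},
)
print(trace.to_json())
```

The point of the structure is that the reasoning (inputs, exception route, approver) is stored alongside the outcome rather than only the final `state_written` that a CRM would keep.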

Decision traces compound. Rules do not.

Here is the real difference. Rules are static until someone rewrites them. A discount policy stays at 15% until a pricing committee revisits it. An escalation matrix holds until someone updates the documentation.

Decision traces grow through use. Every renewal decision that gets captured, with its full context of signals, exceptions, and outcomes, makes the next decision better informed. Over time, the organization builds a queryable record of how decisions were actually made, not just what the policy said.

This is what turns an AI agent from a policy-enforcement tool into something that reflects how the business actually operates. The agent handling next year’s renewal for that same mid-market account can now see the pattern: churn risk signals from support data, competitive pressure flagged in account conversations, the exception approval path that was used, and the outcome. It can weigh the current situation against demonstrated precedent rather than choosing between blind enforcement and blind repetition.
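The difference between blind repetition and weighing precedent can be sketched as a similarity check: the agent raises its discount limit only when a past decision with comparable conditions ended well, and it still routes the exception for approval. This is an illustrative assumption, not a described product feature; `Precedent`, `recommend`, the signal names, and the use of Jaccard similarity with a 0.6 threshold are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Precedent:
    """Hypothetical summary of a past decision trace."""
    signals: frozenset              # conditions present at decision time
    discount: float                 # discount actually granted
    exception_route: Optional[str]  # exception path used, if any
    outcome: str                    # e.g. "renewed" or "churned"


def similarity(a: frozenset, b: frozenset) -> float:
    """Jaccard similarity between two sets of signals."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def recommend(current: frozenset, history: List[Precedent],
              ceiling: float = 0.15, min_similarity: float = 0.6) -> dict:
    # Only successful precedents with sufficiently similar conditions count
    relevant = [p for p in history
                if p.outcome == "renewed"
                and similarity(current, p.signals) >= min_similarity]
    if not relevant:
        # No comparable precedent: fall back to the documented rule
        return {"discount_cap": ceiling, "route": None,
                "needs_approval": False, "basis": "policy"}
    best = max(relevant, key=lambda p: similarity(current, p.signals))
    cap = max(ceiling, best.discount)
    return {
        "discount_cap": cap,
        "route": best.exception_route,
        # Precedent supports a proposal; exceeding the ceiling still needs sign-off
        "needs_approval": cap > ceiling,
        "basis": "precedent",
    }


history = [Precedent(frozenset({"support_spike", "nps_drop", "competitor_eval"}),
                     0.20, "churn_risk_retention", "renewed")]

# Same conditions as last year: the exception path is surfaced for approval
print(recommend(frozenset({"support_spike", "nps_drop", "competitor_eval"}), history))
# Only one matching signal: the agent falls back to the 15% ceiling
print(recommend(frozenset({"support_spike"}), history))
```

Keeping `needs_approval` in the result reflects the article's point: precedent informs the agent's proposal, but the exception path that made the original decision legitimate is still followed.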

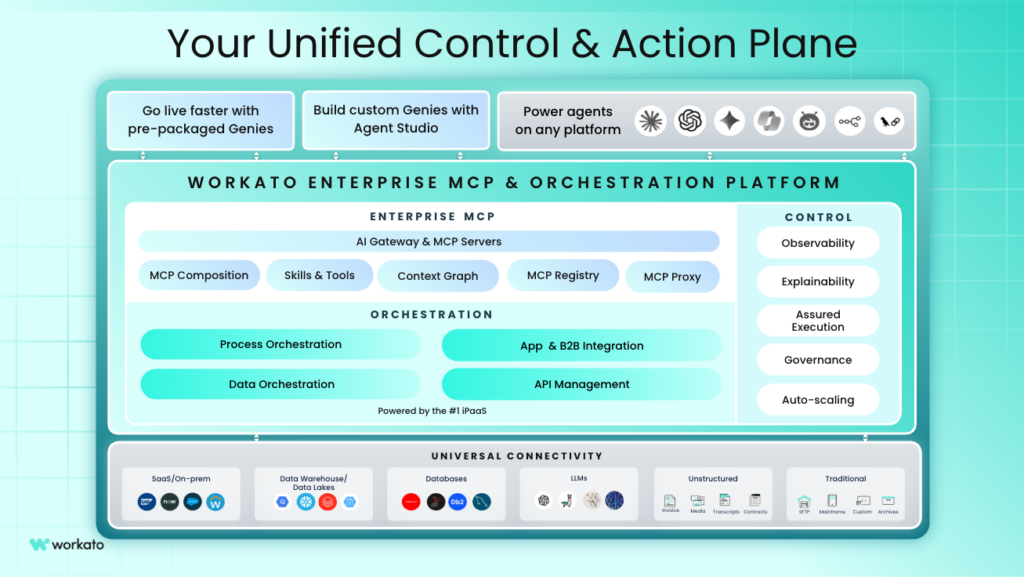

This is exactly what Workato is designed to capture. Because Workato orchestrates work across systems, it sits in the execution path where decision traces are generated. The platform sees the inputs gathered from CRM, support, and communication tools. It sees the policy that was evaluated, the exception that was invoked, and the approval that made it official. Those traces are preserved as structured organizational knowledge, not lost in Slack threads or someone’s memory.

Workato’s Enterprise MCP adds the governed connectivity that makes this practical at scale. Agents operating through the platform inherit the permissions, audit trails, and access controls that enterprise operations require. The decision trace is captured with full context and full governance, which means leadership can trust it as a reliable record of how the business actually operates.

Over time, this compounds. Every decision an agent processes through Workato adds to the organizational knowledge base. The renewal agent handling that mid-market account next year does not start from scratch. It starts with a record of the signals, the judgment, and the outcome from the last time a similar decision was made.

The gap that matters most

Most enterprise AI conversations focus on model capability, connectivity, or governance. Those all matter. But the organizations pulling ahead are the ones investing in the layer beneath all three: the accumulated knowledge of how decisions are actually made.

Rules tell an agent what to do. Decision traces tell it how the organization thinks. The enterprises that capture both will build AI that gets smarter with every decision. The ones that rely on rules alone will keep rebuilding the same agents every time the policy changes.

This article is part of the Enterprise Context Graph series.

Explore the rest of the series:

- The Enterprise Context Graph Explained

- Why Most Enterprise AI Search is Still Surface Level

- The Glue Function Problem: Why RevOps, DevOps, and SecOps Are the AI Opportunity