Most enterprise AI stalls in the same place. Not at the model, not at the data, but in the space between applications where the real work actually happens.

Most enterprise AI projects never reach production. Not because the models are too weak or the tools are too immature. Because the AI was deployed where the data lives, not where the decisions are made.

There is a difference, and it matters.

Foundation Capital’s Ashu Garg named it in his December 2025 essay on context graphs. The most consequential organizational knowledge does not sit inside any single system. It lives in the white space between applications, in what Garg calls “glue functions.” These are the roles responsible for stitching together context that no single system can hold.

- RevOps connects sales, finance, and customer success.

- DevOps coordinates engineering, QA, and infrastructure.

- SecOps triangulates identity, network, and application signals.

These functions make decisions by synthesizing inputs from five or more systems at once. They are also, almost without exception, where enterprise AI runs out of road.

The reason AI keeps stalling is not a model problem. It is an infrastructure problem.

The glue is where the intelligence lives

Three examples. One per function. These are the patterns that show up inside enterprise AI deployments when things do not work the way they were supposed to.

RevOps: The forecast that no system owns

A revenue operations team is investigating why Q1 closed business is running 18% below forecast. They pull from Salesforce for pipeline data, Marketo for lead quality by segment, and their finance system for booked revenue. Each system gives them a clean answer. No two of those answers agree.

The discrepancy exists because the pipeline data in Salesforce reflects rep-level judgment calls on close probability, Marketo’s lead quality score was recalibrated in January, and finance is recognizing revenue on a different schedule than the CRM is recording it. The real story lives in the decisions that connected those systems over the past quarter: the rep who pushed a close date, the ops manager who adjusted scoring, the finance analyst who flagged a booking timing issue in a spreadsheet that never touched any system of record.

An AI tool deployed inside Salesforce sees pipeline. An AI tool deployed inside Marketo sees lead data. Neither sees the decision thread that explains the gap. Without that thread, both can only confirm the discrepancy that is already visible on the dashboard.

DevOps: The release that nobody stopped in time

A DevOps team is coordinating a Thursday production release. Engineering has approved in GitHub. QA has signed off in Jira.

PagerDuty is showing elevated error rates on a dependency service that was updated Tuesday. The Slack thread where the on-call engineer flagged the issue is three levels deep in a channel with 200 other messages.

The release goes out. The error rates spike. Three hours later, someone finds the thread.

The context that would have stopped that release was not in GitHub alone, not in Jira alone, and not in PagerDuty alone. It was in the relationship between those signals, assembled by a human who read all three and connected the dots. An AI that monitors any one of those systems generates noise. An AI that sits between them, in the execution path where work actually flows, generates signal.
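
To make the "relationship between those signals" concrete, here is a minimal sketch of a cross-system release check. Everything in it is an assumption for illustration: the `ReleaseSignals` fields, the `release_blockers` function, the three-day window, and the 2x spike threshold are hypothetical, and the sketch does not call any real GitHub, Jira, or PagerDuty API. It only shows that the blocking condition exists when the signals are read together.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ReleaseSignals:
    """Hypothetical snapshot of signals gathered from three separate systems."""
    code_approved: bool              # approval recorded in the code host
    qa_signed_off: bool              # sign-off recorded in the issue tracker
    dependency_error_rate: float     # current error rate on a dependency
    baseline_error_rate: float       # normal error rate for that dependency
    dependency_updated_at: datetime  # when the dependency last changed


def release_blockers(
    signals: ReleaseSignals,
    now: datetime,
    recent_window: timedelta = timedelta(days=3),  # assumed threshold
    spike_factor: float = 2.0,                     # assumed threshold
) -> list[str]:
    """Return the reasons to hold a release; an empty list means proceed."""
    blockers = []
    if not signals.code_approved:
        blockers.append("code not approved")
    if not signals.qa_signed_off:
        blockers.append("QA sign-off missing")

    # The cross-system condition: neither fact alone blocks the release,
    # but a recent dependency change plus elevated errors does.
    recently_changed = now - signals.dependency_updated_at <= recent_window
    elevated = (
        signals.dependency_error_rate
        > spike_factor * signals.baseline_error_rate
    )
    if recently_changed and elevated:
        blockers.append("recently updated dependency shows elevated error rate")
    return blockers
```

With both approvals in place, a Thursday check against a dependency updated on Tuesday still returns a blocker if that dependency's error rate is well above baseline. A check that looks only at approvals would let the release through.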

SecOps: The alert that means something different in context

A security operations team receives a high-volume alert from Splunk: unusual authentication behavior across 12 accounts. CrowdStrike shows no malware. The accounts belong to a sales team that just returned from a week-long offsite, logging in from hotels, airports, and personal devices across six time zones.

The alert is real. The threat is not. The reason it is not a threat lives outside Splunk and CrowdStrike entirely.

It lives in an HR system showing the offsite dates, a travel tool showing the hotel locations, and a decision made by security leadership three months ago to whitelist conference travel patterns after a similar false positive. That decision was documented in a ServiceNow ticket that was resolved and archived.

An AI triage tool running inside Splunk sees the anomaly and escalates. A human analyst who knows the business context does not. Intelligence is not the gap. Context is.
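
A rough sketch of what context-aware triage could look like follows. The types `AuthAlert` and `TravelContext`, the `triage` function, and the two-day grace period are all hypothetical names and values chosen for illustration. Nothing here reflects an actual Splunk, CrowdStrike, or ServiceNow interface. The sketch assumes the travel dates and the earlier policy decision have already been gathered from outside the security tools.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AuthAlert:
    """Hypothetical alert as seen by the security tooling alone."""
    accounts: set[str]
    alert_date: date
    malware_detected: bool


@dataclass
class TravelContext:
    """Hypothetical context that lives outside the security tools."""
    travelers: set[str]                # accounts on approved travel
    start: date                        # first day of the trip
    end: date                          # last day of the trip
    policy_allows_travel_logins: bool  # prior leadership decision


def triage(alert: AuthAlert, ctx: TravelContext, grace_days: int = 2) -> str:
    """Return "escalate" or "downgrade" for an unusual-authentication alert."""
    if alert.malware_detected:
        return "escalate"

    # The alert is explained only if it falls in (or just after) the trip
    # and every affected account belongs to someone who was traveling.
    window_end = ctx.end + timedelta(days=grace_days)
    in_window = ctx.start <= alert.alert_date <= window_end
    unexplained = alert.accounts - ctx.travelers

    if ctx.policy_allows_travel_logins and in_window and not unexplained:
        return "downgrade"
    return "escalate"
```

Without a `TravelContext`, the only defensible answer is to escalate, which is the behavior of the tool running inside a single system. Note that one account outside the traveler list is enough to keep the alert escalated, so the context narrows the alert rather than silencing it.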

What these three examples have in common

Each scenario shares the same structure. The AI has access to the data. The AI does not have access to how decisions were made using that data. The context lives between applications, assembled in real time by people whose job is to be the connective tissue.

Garg put it directly: the most consequential enterprise knowledge does not live in systems of record or knowledge bases. It lives in the white space between applications.

RevOps, DevOps, and SecOps are not edge cases. They are where enterprise decisions are made. And most enterprise AI was built to look down into individual systems, not to sit between them.

That architectural choice is why pilots stall when they try to reach production. The data is there. The decisions that make the data meaningful are not.

Sitting between systems is a design decision

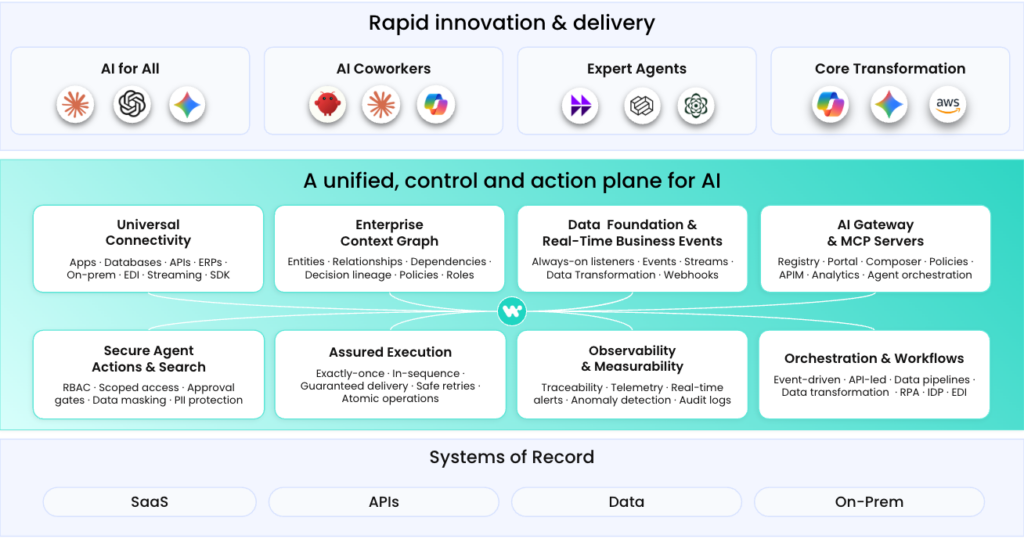

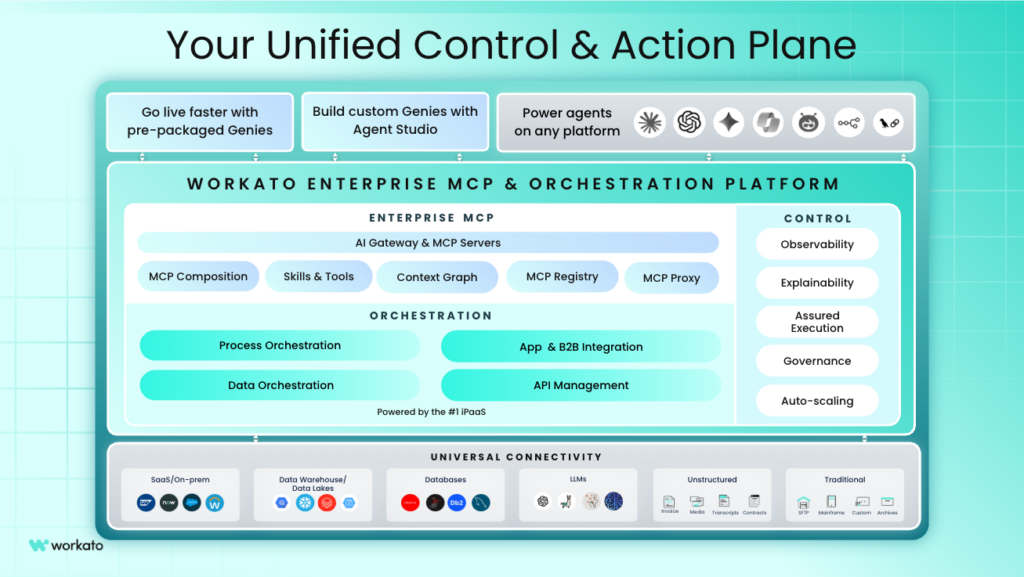

This is where Workato changes the equation. Because Workato orchestrates work across systems rather than inside any single one, it sits in the execution path where glue function decisions are made. When a RevOps workflow runs through the platform, it sees the Salesforce input, the Marketo signal, and the finance flag alongside the cross-system decision that connected them. Those traces are preserved as structured organizational knowledge, not lost in a thread.

Enterprise MCP provides the governed connectivity that makes this practical at enterprise scale. An agent operating through Workato inherits permissions across systems, sees the full decision context at the moment work happens, and acts within the governance guardrails that security and compliance teams require. The context is not reconstructed after the fact. It is captured where decisions are generated.

This is what makes glue functions the right starting point for enterprise AI. The people doing RevOps, DevOps, and SecOps work are already assembling cross-system context. They do it manually, at the cost of their most experienced people’s time. A platform built to sit between applications amplifies that work rather than trying to replace it from inside a single system.

This is why your AI keeps stalling

Three different functions. Three different tool stacks. The same problem in every one: the AI was inside a single application when the intelligence was between applications.

RevOps context disappears into a spreadsheet nobody linked. DevOps context disappears into a Slack thread nobody searched in time. SecOps context disappears into a resolved ticket nobody connected to the current alert. The pattern is consistent. The cause is architectural.

Most enterprise AI tools connect to your systems. Workato sits between them. It captures decision traces where they are actually generated, in the execution path across systems, rather than reconstructing them after the fact from documents. Enterprise MCP governs every action agents take across that path, so the context captured is not just accurate. It is trustworthy enough to act on.

The enterprises pulling ahead on AI are not the ones with the best models or the most tools. They are the ones that gave AI a place to stand between systems, where the glue functions have always operated. That is the architectural decision that determines whether AI reaches the intelligence that actually runs your business, or stops at the edge of each application and asks a human to fill the gap.

The gap is real. It has a name now. And it has a fix.

This article is part of the Enterprise Context Graph series. Explore the rest of the series:

- The Enterprise Context Graph Explained

- Rules vs. Decision Traces: What Your AI Actually Needs to Know

- Why Most Enterprise AI Search is Still Surface Level