By now, you are probably sick and tired of articles, social network posts, webinars and conference presentations about AI agents. Even so, we felt compelled to contribute this article, in hopes that it would rise above the noise, because there is an issue that many AI leaders and practitioners are still not paying enough attention to: the challenge of making AI agents, traditional application systems, data and human tasks work together. For simplicity, we call it the AI agent orchestration challenge.

Orchestration is the missing piece.

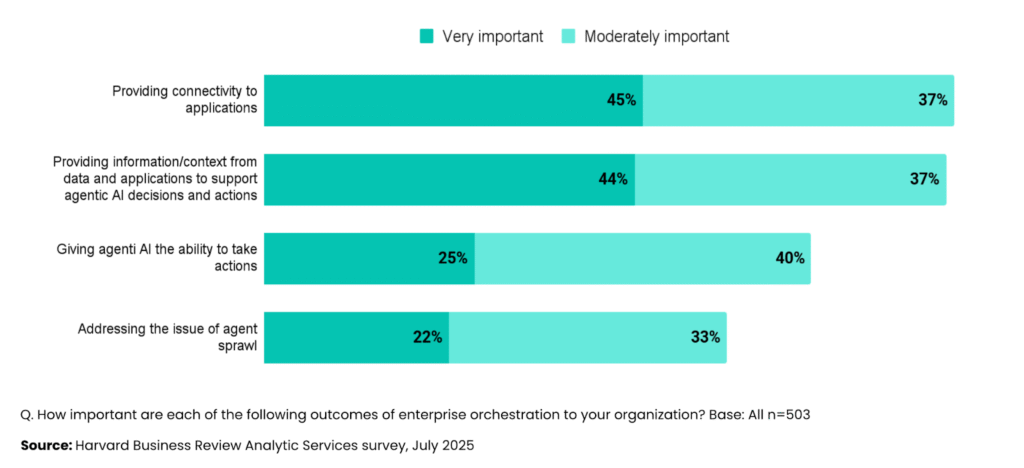

A recent Harvard Business Review survey revealed something striking: 82% of organizations recognize that providing connectivity to applications is important for their AI agent initiatives, and 80% understand the need for providing information from data and applications.

Importance of Application and Data Integration for AI Agents

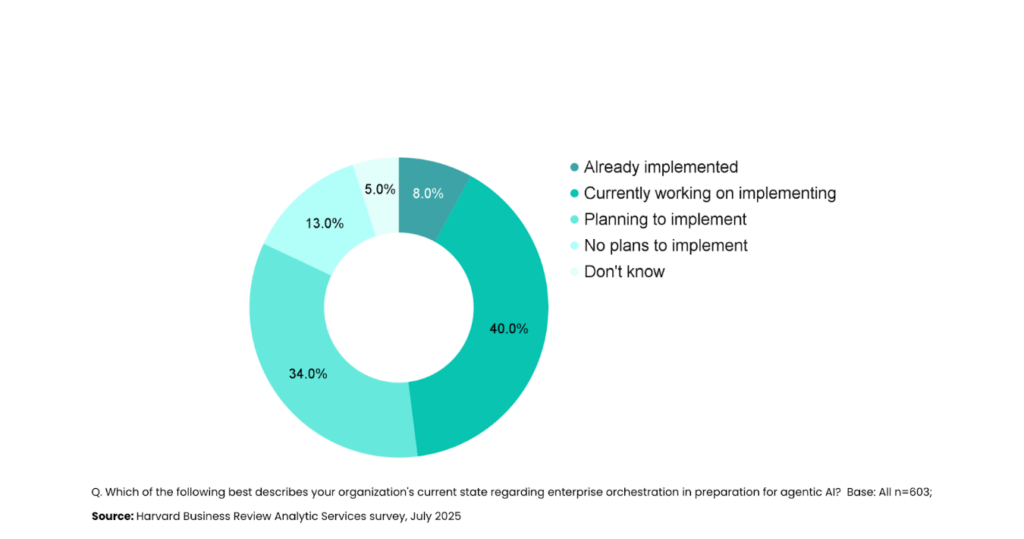

Yet only 5% have actually implemented orchestration strategies to support their AI agents. Another 74% are scrambling to figure it out.

If you’re investing in AI agents but haven’t addressed how they’ll work with your existing systems, data, and processes, you’re building on quicksand.

AI Agents Won’t Work in a Vacuum, They Need Orchestration

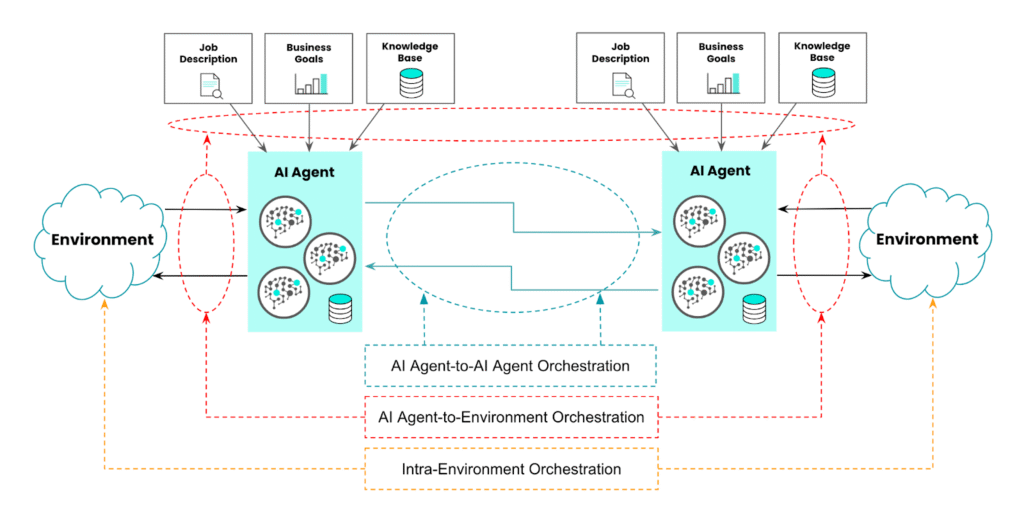

AI agents don’t operate in isolation. They operate in an environment—your organization’s data, applications, documents, processes, partners, devices and people. A real agent senses events happening in your environment, decides on the best course of action based on business context, and performs those actions to achieve a goal.

Consider an AI agent that can execute on sales processes like fulfilling customer purchase orders. It receives an order event, but then needs to check inventory levels in your warehouse system, verify payment history in your financial system, assess satisfaction scores in your CRM, and coordinate with logistics. Only after evaluating all these factors can it issue an invoice and fulfill the order—or send a personalized explanation if conditions aren’t met.

Every one of those interactions requires orchestration. Without it, your AI agent is just an expensive chatbot.
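The order-fulfillment scenario above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the three dictionaries stand in for the warehouse, financial and CRM systems, the thresholds are invented, and using the satisfaction score to pick a shipping priority is our own simplification rather than a rule from any real system.

```python
from dataclasses import dataclass


@dataclass
class Order:
    customer_id: str
    sku: str
    quantity: int


# Hypothetical stand-ins for the warehouse, financial and CRM systems.
INVENTORY = {"WIDGET-1": 40}
LATE_PAYMENTS = {"acme": 0, "globex": 3}
SATISFACTION = {"acme": 4.6, "globex": 2.1}


def handle_order(order: Order) -> str:
    """Decide how to respond to an order event using data from three systems."""
    in_stock = INVENTORY.get(order.sku, 0) >= order.quantity
    pays_on_time = LATE_PAYMENTS.get(order.customer_id, 0) <= 1  # assumed threshold
    satisfied = SATISFACTION.get(order.customer_id, 0.0) >= 3.0  # assumed threshold

    if not in_stock:
        return f"explain: {order.sku} is out of stock"
    if not pays_on_time:
        return "explain: payment history requires prepayment"

    # Reserve the stock; the hand-off to logistics is reduced to a priority label.
    INVENTORY[order.sku] -= order.quantity
    priority = "standard" if satisfied else "expedited"
    return f"invoice issued, {priority} shipping"
```

Even in this toy version, a single decision touches three systems before anything is invoiced, which is where the orchestration effort goes in practice.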

Therefore, a key aspect of any AI agent implementation is defining the actions the AI agent itself can take. For example:

- The data and business applications it can access.

- The business processes it can trigger.

- The human approvals and sign-off it requires.

- The associated authorization and authentication rules.

These actions effectively define what the AI agent itself is capable of doing. They are often called AI agent “skills”.
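One way to picture a skill is as a small record that bundles the action with its authorization rule and its approval requirement. The sketch below assumes a hypothetical `Skill` and `SkillRegistry` of our own design; the scope strings and skill names are invented for illustration and do not come from any particular product or protocol.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Skill:
    name: str
    required_scope: str         # authorization rule (hypothetical scope string)
    needs_approval: bool        # whether a human must sign off first
    run: Callable[[dict], str]  # the action performed on the environment


class SkillRegistry:
    def __init__(self) -> None:
        self._skills: dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def invoke(self, name: str, args: dict, scopes: set[str], approved: bool = False) -> str:
        skill = self._skills[name]  # raises KeyError for an unknown skill
        if skill.required_scope not in scopes:
            raise PermissionError(f"missing scope {skill.required_scope!r}")
        if skill.needs_approval and not approved:
            return f"pending approval: {name}"
        return skill.run(args)


registry = SkillRegistry()
registry.register(Skill("read_inventory", "warehouse:read", False,
                        lambda a: f"stock for {a['sku']}"))
registry.register(Skill("issue_refund", "finance:write", True,
                        lambda a: f"refunded {a['amount']}"))
```

Keeping the access rule and the approval flag next to the action means the agent cannot reach a system except through a skill that states who may use it and whether a human has to agree first.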

In response to a certain event the AI agent makes decisions to activate skills based on its:

- Job Description: human-provided instructions about what the AI agent can and cannot do

- Knowledge Base: information about the specific scenario the AI agent operates in (e.g., your company's finance department) in terms of policies, procedures, constraints, rules, etc.

- Business Goal: for example, maximize profitability, optimize customer satisfaction, minimize stock level, etc.

- Memory: a “log” of the AI agent’s previous activity in terms of scenarios, processed events, actions taken, outcomes, failures and other contextual details.

Once it has made its decisions (that is, it has devised “the best course of action”), the AI agent triggers the relevant skills in the defined sequence, either autonomously or semi-autonomously (that is, with humans in the loop), and thereby acts on the environment.
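The interplay of job description, knowledge base, business goal and memory can be sketched as a small event loop. Everything here is an assumption made for illustration: the `Agent` class, the `approval_limit` policy and the three skill names are hypothetical, and the `plan` method is a hard-coded stand-in for the reasoning step that a real agent would likely delegate to a language model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    allowed_skills: set[str]                  # job description: what the agent may do
    knowledge: dict[str, float]               # knowledge base: policies and limits
    goal: str                                 # business goal; this sketch only records it
    skills: dict[str, Callable[[dict], str]]  # skills the agent can trigger
    memory: list[dict] = field(default_factory=list)  # log of past activity

    def plan(self, event: dict) -> list[str]:
        """Hard-coded stand-in for the agent's reasoning step."""
        steps = ["check_stock"]
        if event["amount"] > self.knowledge["approval_limit"]:
            steps.append("request_approval")  # human in the loop
        steps.append("ship")
        return [s for s in steps if s in self.allowed_skills]

    def handle(self, event: dict) -> list[str]:
        # Trigger the planned skills in sequence, then log the episode.
        outcomes = [self.skills[step](event) for step in self.plan(event)]
        self.memory.append({"goal": self.goal, "event": event, "outcomes": outcomes})
        return outcomes


agent = Agent(
    allowed_skills={"check_stock", "request_approval", "ship"},
    knowledge={"approval_limit": 1000.0},
    goal="optimize customer satisfaction",
    skills={
        "check_stock": lambda e: "in stock",
        "request_approval": lambda e: "approved by manager",
        "ship": lambda e: f"shipped order {e['id']}",
    },
)
```

A small order runs fully autonomously, while an order above the assumed limit adds the approval step, which is the semi-autonomous case described above.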

The diagram below schematically describes how all these moving parts play together.

Anatomy of an AI Agent

Bottom Line: The ability of AI agents to interact with their environment (that is, to receive events from it and perform actions on it) is a critical requirement for success.

The Multi-Agent Reality

As AI agent sprawl becomes evident with every team and vendor offering their version of an AI assistant, the challenge multiplies with multi-agent systems. Individual AI agents are limited in their knowledge base, context window size, and available skills. Multi-agent systems overcome these limitations through specialized collaboration—a customer support agent handing off to a finance agent for billing issues, or supply chain agents coordinating across procurement, logistics, and finance.

However, to collaborate, AI agents need to discover each other’s capabilities, activate each other, and exchange information seamlessly.
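Discovery and hand-off can be illustrated with a plain in-memory directory. This is a conceptual sketch only: `AgentProfile` and `AgentDirectory` are hypothetical names, and nothing here reflects the wire format or API of A2A or any other protocol.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentProfile:
    name: str
    capabilities: set[str]       # what the agent advertises it can do
    handle: Callable[[str], str]  # how another agent activates it


class AgentDirectory:
    def __init__(self) -> None:
        self._profiles: list[AgentProfile] = []

    def publish(self, profile: AgentProfile) -> None:
        self._profiles.append(profile)

    def discover(self, capability: str) -> list[AgentProfile]:
        return [p for p in self._profiles if capability in p.capabilities]

    def hand_off(self, capability: str, message: str) -> str:
        candidates = self.discover(capability)
        if not candidates:
            raise LookupError(f"no agent offers {capability!r}")
        return candidates[0].handle(message)  # naive choice: first match


directory = AgentDirectory()
directory.publish(AgentProfile("support", {"faq", "returns"}, lambda m: f"support: {m}"))
directory.publish(AgentProfile("finance", {"billing"}, lambda m: f"finance: {m}"))
```

With this in place, a support agent that receives a billing question could call `directory.hand_off("billing", ...)` instead of needing billing knowledge of its own.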

Three Orchestration Challenges You Can’t Ignore

Your AI orchestration strategy needs to address three distinct challenges:

- Agent-to-Environment (how agents receive events, access data, and trigger actions through APIs, event streams, and file exchanges),

- Agent-to-Agent (enabling multiple AI agents to discover their respective capabilities and collaborate), and

- Intra-Environment (building “composite” skills that orchestrate multiple elementary actions across systems).

AI Agent Orchestration Challenges

AI Agent Standards Are Emerging (But Immature)

Standards like Model Context Protocol (MCP) and Agent-to-Agent (A2A) protocols are unlocking connectivity and interoperability for AI agents. MCP has gained significant traction for connecting AI agents to their environment. A2A is generating industry buzz for agent collaboration. However, there are several other AI agent orchestration protocols proposed by vendors, industry consortia, academia and even individuals. A non-exhaustive list includes:

- AI agent-to-AI agent orchestration protocols:

  - Agent2Agent (A2A) Protocol – Linux Foundation

  - Agent Connect Protocol (ACP) – AGNTCY/Linux Foundation

  - Agent Interaction & Transaction Protocol (AITP) – NEAR AI

  - Agent Network Protocol (ANP) – Geowei Chang, Hangzhou Vector Consensus Technology Co.

  - Networked Agents And Decentralized AI (NANDA) – MIT

- AI agent-to-environment protocols:

  - agents.json – Wild-card.ai

  - Model Context Protocol (MCP) – Anthropic; donated to the Linux Foundation, now under the Agentic AI Foundation (AAIF)

  - Universal Tool Calling Protocol (UTCP) – UTCP Open Source Community

The reality check: these protocols are nascent. MCP has published four versions in one year—vendors struggle to keep pace. There’s no official certification, so you can’t be sure two “MCP-compliant” implementations will work together without testing. Security, scalability, and multi-tenancy support remain limited.

Yet organizations that embrace these standards early are gaining advantages: establishing common architectural frameworks, reducing vendor lock-in, and lowering implementation costs. The standards may be imperfect, but they’re directionally correct—and waiting for perfection means falling behind.

What Successful Orchestration Looks Like

Organizations that get AI agent orchestration right share three characteristics. They think strategically, defining a unified technology stack and clear delivery model rather than letting every AI project adopt its own tools. This prevents the nightmare of AI sprawl creating hundreds of expensive overlapping systems, thousands of ungoverned interfaces, and skyrocketing technical debt.

They appoint an orchestration czar who owns the strategy—both conceptually and operationally—with resources to implement the platform and accountability for business and technical outcomes. Often, this role goes to someone who already leads automation, integration, or API strategy, bringing established expertise to the AI domain.

And they proceed incrementally, building project-by-project to maintain resilience against technology shifts. The technology foundation for orchestration—APIs, event-driven architectures—is mature. But AI-specific capabilities like LLM routing and AI gateways remain immature and fast-evolving. Smart organizations include risk mitigation measures and review their strategy periodically.

The Cost of Waiting to Invest in Agentic Orchestration

When organizations deploy AI agents without orchestration, the agents work beautifully in demos and impress stakeholders in pilots. Then they fail to scale because no one can figure out how to connect them to the fifty other systems they need. Teams build one-off integrations. Technical debt accumulates. Costs spiral. The board starts asking uncomfortable questions about AI ROI.

So, who are the organizations seeing 10-36% higher returns from their AI investments? They are the ones who solved orchestration early. They understood that AI agents without orchestration are like brilliant executives without assistants, phones, or email: isolated from the information and systems they need to drive real business impact.

The question isn’t whether you need orchestration. It’s whether you’ll address it strategically now, or scramble to fix it later when your agents can’t scale beyond proof-of-concept. For more on this topic and guidance on how to scale AI agents with orchestration, access my full research paper here.