Enterprises are moving quickly to deploy AI agents, yet trust remains the biggest barrier to putting them into production. A recent Workato and Harvard Business Review study highlights this gap clearly. While 84 percent of leaders believe agentic AI will transform their business, only 6 percent fully trust agents to run core processes autonomously.

Why Trust Is the Core Challenge

The traits that make agents powerful also create a new class of risk. Unlike copilots or traditional automation, agents operate as digital insiders. They can initiate transactions, access enterprise systems, and trigger downstream workflows with real business impact. That autonomy expands the enterprise attack surface and introduces failure modes that traditional security and governance models were never designed to handle.

When agents act without clear identity, permission boundaries, or oversight, even small mistakes can cascade across connected systems. A single error can propagate through finance, operations, customer experience, or compliance workflows in seconds. This is why trust becomes the deciding factor in whether agentic AI can move beyond experimentation.

The Enterprise Trust Problem

Most AI agents today connect to enterprise systems using a single set of credentials with broad, unrestricted access. On paper, this simplifies deployment. In practice, it creates significant risk. That risk shapes how organizations adopt agents in real scenarios. In the HBR trust gap study, 43 percent of respondents said they trust AI only for limited or routine tasks, and 39 percent restrict agents to supervised or non-core processes. This cautious stance underscores how far enterprises are from letting agents act independently in high-risk workflows.

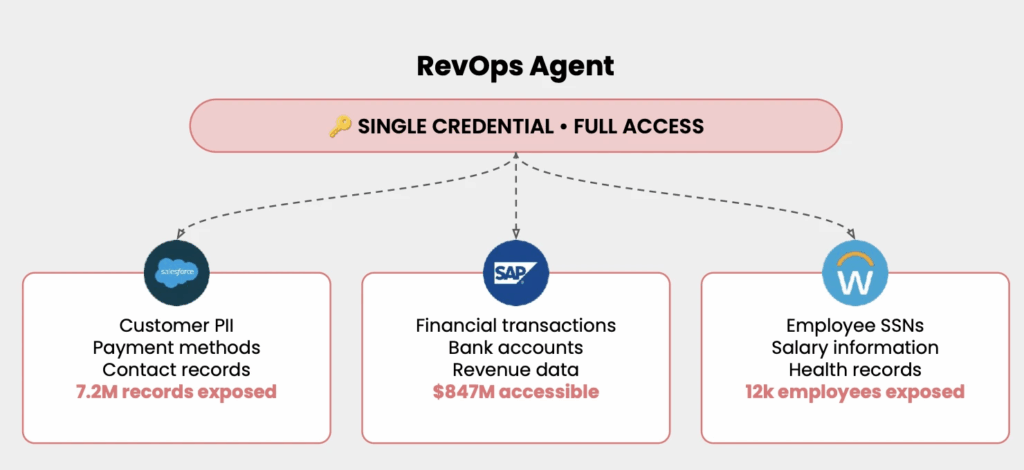

Consider a typical RevOps agent. To be useful, it needs access to customer data, financial systems, and employee information. Without proper identity inheritance and permission boundaries, that agent often operates with full access across every connected system. One credential becomes a master key.

As the diagram illustrates, a single agent credential can expose millions of customer records in CRM systems, financial transactions and revenue data in ERP platforms, and sensitive employee information such as salaries and health records in HR systems. What was intended to automate routine revenue operations can now touch the most sensitive data across the enterprise.

This is not a hypothetical scenario. It is how many agents are deployed today.

The risk is not just data exposure. It is loss of control. When agents operate with broad credentials, enterprises cannot enforce least-privilege access, cannot confidently audit who did what, and cannot explain why a specific action occurred. A mistake, misconfiguration, or compromised agent can ripple across systems in seconds, affecting customers, employees, and financial reporting at once.

This is why trust breaks down so quickly once agents move beyond narrow pilots. Leaders are not unwilling to automate. They are unwilling to grant unrestricted access to systems that were never designed for autonomous actors. Without a security model that treats agents as first-class identities with defined roles, permissions, and oversight, autonomy becomes a liability instead of an advantage.
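To make the contrast with a master-key credential concrete, here is a minimal Python sketch of an agent modeled as a first-class identity with an explicit, deny-by-default permission set. The names used (`AgentIdentity`, the `system:resource:action` scope strings, `revops-agent`) are hypothetical and chosen only for illustration. They do not correspond to any particular product's API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical agent identity with an explicit allow-list of scopes."""
    agent_id: str
    role: str
    scopes: frozenset = field(default_factory=frozenset)

    def can(self, system: str, resource: str, action: str) -> bool:
        # Deny by default: only an exact scope match is permitted.
        return f"{system}:{resource}:{action}" in self.scopes


# A RevOps agent scoped to what its job needs, not to every connected system.
revops_agent = AgentIdentity(
    agent_id="revops-agent",
    role="revenue_operations",
    scopes=frozenset({
        "crm:accounts:read",
        "crm:opportunities:update",
        "erp:invoices:read",
    }),
)

print(revops_agent.can("crm", "accounts", "read"))  # True
print(revops_agent.can("hr", "salaries", "read"))   # False
```

Because anything not listed is refused, adding a new connected system does not silently widen what the agent can reach.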

Why Agents Need an Enterprise Security Model

Agentic AI requires a fundamentally different security approach than traditional automation. Agents are dynamic and generative. They reason, adapt, and act across systems rather than following predefined scripts. This demands security that evaluates identity, permissions, and intent in real time, not static credential checks applied after the fact.

Enterprise leaders consistently cite inconsistent access controls, lack of auditability, and weak policy enforcement as the biggest blockers to scaling AI. The issue is not whether agents can act. It is whether they should act. Without a security and governance model designed for autonomous systems, organizations cannot safely deploy agents in high-stakes workflows.

What Enterprise Trust Requires

Trust in agentic AI requires governance that is built into the execution layer itself. This includes:

- Role-based access controls aligned to enterprise identity

- Permission-aware access to systems and data

- Real-time enforcement of policies and rules

- Automatic data masking and PII protection

- Full audit trails and traceability for every action

- Continuous monitoring and anomaly detection

- Support for compliance frameworks such as SOC 2, PCI DSS, ISO, and GDPR

This foundation allows agents to operate with autonomy while maintaining enterprise-grade control. It also gives security, risk, and compliance teams the visibility they need to approve agentic use cases with confidence.

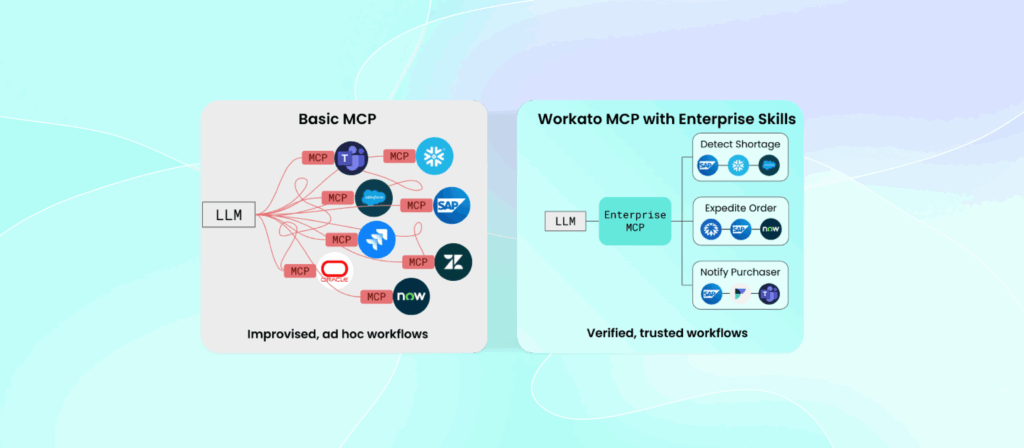

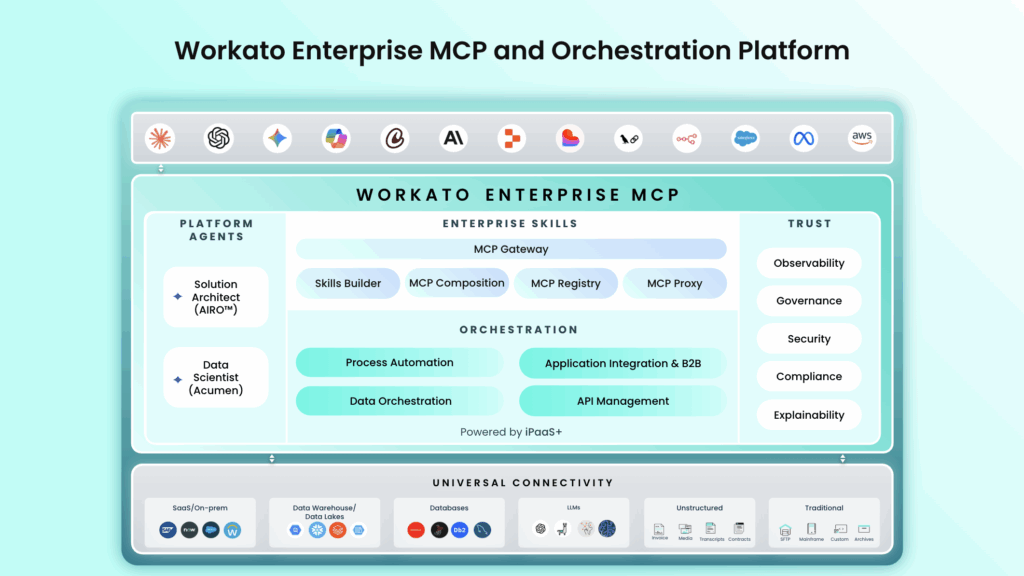

How Enterprise MCP Closes the Trust Gap

Enterprise MCP provides a governed architecture that mediates every agent interaction with enterprise systems. Instead of allowing agents to connect directly to applications and data sources, all actions are authenticated, authorized, and evaluated against defined policies before they execute.

Key capabilities include identity inheritance, permission-aware execution, runtime policy enforcement, data protection controls, and complete observability. Every agent action is logged, traceable, and explainable. If an agent attempts to act outside approved boundaries, the system blocks it immediately.
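The mediation pattern described above can be sketched in a few lines of Python. This is an illustrative toy, not the Model Context Protocol wire format or any vendor's Enterprise MCP API. `GovernedGateway`, `ActionRequest`, `PolicyViolation`, and the `refund_limit` rule are all hypothetical names. The point is only the order of operations: identify the agent, check its grants, evaluate runtime policies, then execute, recording the decision either way.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Set


@dataclass
class ActionRequest:
    agent_id: str
    tool: str
    params: Dict[str, Any]


class PolicyViolation(Exception):
    """Raised when a request is blocked before execution."""


class GovernedGateway:
    """Hypothetical mediation layer: every agent action passes through handle()."""

    def __init__(self, grants: Dict[str, Set[str]],
                 policies: List[Callable[[ActionRequest], bool]]):
        self.grants = grants      # agent_id -> tools that agent may call
        self.policies = policies  # runtime rules; each returns True to allow
        self.tools: Dict[str, Callable[..., Any]] = {}
        self.audit_log: List[Dict[str, Any]] = []

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def handle(self, req: ActionRequest) -> Any:
        decision = "allowed"
        try:
            if req.agent_id not in self.grants:
                decision = "blocked: unknown agent"
                raise PolicyViolation(decision)
            if req.tool not in self.grants[req.agent_id]:
                decision = "blocked: tool not permitted"
                raise PolicyViolation(decision)
            if not all(policy(req) for policy in self.policies):
                decision = "blocked: policy violation"
                raise PolicyViolation(decision)
            return self.tools[req.tool](**req.params)
        finally:
            # Allowed and blocked requests are both recorded.
            self.audit_log.append(
                {"agent": req.agent_id, "tool": req.tool, "decision": decision}
            )


def refund_limit(req: ActionRequest) -> bool:
    # Example runtime rule (assumed): no autonomous refunds above 500.
    return not (req.tool == "issue_refund" and req.params.get("amount", 0) > 500)


gateway = GovernedGateway(
    grants={"support-agent": {"issue_refund"}},
    policies=[refund_limit],
)
gateway.register(
    "issue_refund",
    lambda customer_id, amount: f"refunded {amount} to {customer_id}",
)

print(gateway.handle(ActionRequest(
    "support-agent", "issue_refund", {"customer_id": "c-1", "amount": 40})))
try:
    gateway.handle(ActionRequest(
        "support-agent", "issue_refund", {"customer_id": "c-1", "amount": 9000}))
except PolicyViolation as exc:
    print(exc)  # blocked: policy violation
```

Keeping the checks in one choke point means the agent never holds system credentials itself, and the audit log captures attempts as well as successes.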

This approach replaces over-permissioned, opaque agent behavior with controlled, accountable execution. It allows organizations to scale agentic AI while maintaining the trust required for production use.

A Practical Example

Consider an agent tasked with accessing and updating customer information across multiple systems. In many environments, that agent would operate using broad credentials, exposing far more data than necessary and leaving little visibility into what actions were taken.

With a governed execution layer in place, the interaction looks very different. When the agent attempts to access customer information, the platform automatically verifies whether the underlying user or role has permission to view that data. Sensitive fields, such as social security numbers or other regulated information, are masked by default. Every action is logged, creating a complete and auditable record of what the agent accessed, changed, or attempted to change.
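A rough Python sketch of that flow follows, assuming a simple role-to-field mapping. The role names, field names, and the `read_customer` helper are hypothetical and stand in for whatever the governing platform actually provides. The agent inherits the role of the user it acts for, sensitive fields are masked unless that role may see them, and each read is appended to an audit trail.

```python
from datetime import datetime, timezone

# Hypothetical mapping of roles to customer fields they may see in clear text.
ROLE_VISIBLE_FIELDS = {
    "support_rep": {"name", "email"},
    "compliance_officer": {"name", "email", "ssn"},
}
SENSITIVE_FIELDS = {"ssn"}

audit_trail = []


def mask(value: str) -> str:
    # Keep the last four characters so records stay recognizable.
    return "*" * max(len(value) - 4, 0) + value[-4:]


def read_customer(record: dict, agent_id: str, on_behalf_of_role: str) -> dict:
    """Return the view of `record` permitted for the role the agent inherits."""
    visible = ROLE_VISIBLE_FIELDS.get(on_behalf_of_role, set())
    view, masked = {}, []
    for key, value in record.items():
        if key in visible:
            view[key] = value
        elif key in SENSITIVE_FIELDS:
            view[key] = mask(value)  # masked by default
            masked.append(key)
        # Any other field the role cannot see is omitted entirely.
    audit_trail.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "role": on_behalf_of_role,
        "fields_returned": sorted(view),
        "fields_masked": masked,
    })
    return view


customer = {
    "name": "Ada Example",
    "email": "ada@example.com",
    "ssn": "123-45-6789",
    "credit_limit": 5000,
}

print(read_customer(customer, "crm-agent", "support_rep"))
# {'name': 'Ada Example', 'email': 'ada@example.com', 'ssn': '*******6789'}
```

The same call made on behalf of a `compliance_officer` would return the unmasked value, and both reads would appear in `audit_trail`.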

Security teams gain full visibility into agent behavior without slowing down operations. At the same time, agents only see and act on the data they are explicitly allowed to access. The result is automation that is fast, compliant, and transparent, even when agents operate autonomously across systems.

The Takeaway

Trust determines whether agentic AI becomes a core enterprise capability or remains a collection of isolated pilots. Organizations want the benefits of autonomous agents, but they need guardrails that make autonomy safe. Research from McKinsey, MIT, and other industry studies points to the same conclusion: trust must be embedded into the architecture, not added as an afterthought.

A governed foundation enables enterprises to move beyond experimentation and deploy agents in the workflows that matter most. When trust is built in, agentic AI can finally deliver on its promise at scale.