Enterprise leaders are moving quickly to adopt AI, enterprise search, agentic tools, and deeper orchestration capabilities. The goal is clear: increase productivity, modernize operations, and enable employees to work more intelligently. The challenge is that most organizations are deploying these technologies in fragments, which slows progress and increases risk.

Gartner predicts that more than 40 percent of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. The tools continue to evolve, but the architecture beneath them has not kept pace.

The problem is not that enterprises use too many AI tools. It is that they use them without a common infrastructure layer underneath. To scale AI safely and reliably, organizations need one enterprise MCP (Model Context Protocol) platform that connects, orchestrates, and governs everything else.

The Cost of a Fragmented AI Architecture

As AI adoption accelerates, most enterprises end up with dozens of disconnected capabilities. Copilots live inside SaaS applications, search tools sit separately, agents run as stand-alone services, and workflow platforms operate independently. The result is structural friction in four areas.

Technical complexity

Each new AI tool or assistant requires its own integration points. Over time, teams accumulate custom logic and brittle connections that are difficult to maintain and even harder to scale.

Higher governance and security risk

Every tool comes with its own permission model, audit approach, and access rules. It becomes difficult to establish consistent policies or validate what AI systems can see or execute across core applications.

Innovation blockers

Without shared context, AI cannot access the workflows, data, and systems required to execute meaningful work. This limits experimentation and prevents AI initiatives from moving beyond isolated pilots.

Fragmented employee experience

Employees switch between multiple interfaces to search for information, trigger workflows, or interact with AI. The inconsistency slows adoption and reduces productivity.

These issues stem not from the tools themselves, but from the lack of a unified platform to support them.

The Case for Centralized AI Infrastructure

Centralizing AI infrastructure does not mean standardizing on a fixed set of tools. It means standardizing the layer beneath them. Enterprises will continue to adopt new models, copilots, agents, and AI services as the market evolves. What must remain consistent is how those tools connect to enterprise data, workflows, and policies.

A centralized AI infrastructure layer gives organizations full flexibility to choose whatever AI tools make sense today and tomorrow, while providing a shared foundation for security, governance, accuracy, and observability. This is how enterprises avoid locking themselves into brittle architectures as their AI stack grows.

Freedom to choose, without fragmentation

Teams can use the AI tools they prefer, including Claude, OpenAI's GPT models, Gemini, vendor copilots, Slack assistants, and workflow platforms. The difference is that these tools are no longer integrated ad hoc. They are connected through a common infrastructure layer that standardizes access, context, and execution.

This allows enterprises to adopt new tools quickly without rebuilding integrations or redefining governance every time the stack changes.

Centralized orchestration and integration

A centralized AI infrastructure layer ensures that every AI system inherits the same enterprise context, access controls, workflows, policies, and audit visibility. Orchestration logic lives in one place, rather than being duplicated across tools.

As a result, AI initiatives scale without increasing complexity, and changes can be made centrally without disrupting downstream systems.
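The idea that orchestration logic lives in one place can be illustrated with a minimal sketch. It assumes a hypothetical in-process gateway that every AI client calls instead of connecting to enterprise systems directly; `Gateway`, `register_tool`, and `call` are illustrative names, not Workato's API and not part of the MCP specification. Policy checks and audit logging are written once, and every client inherits them.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical tool signature: any callable that takes keyword arguments.
ToolFn = Callable[..., Any]


@dataclass
class Gateway:
    """Hypothetical single entry point that every AI client uses to reach tools."""

    tools: dict[str, ToolFn] = field(default_factory=dict)
    # One shared policy: tool name -> roles allowed to invoke it.
    policy: dict[str, set[str]] = field(default_factory=dict)
    # One shared audit trail for every client.
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def register_tool(self, name: str, fn: ToolFn, allowed_roles: set[str]) -> None:
        self.tools[name] = fn
        self.policy[name] = set(allowed_roles)

    def call(self, client: str, user_role: str, tool: str, **kwargs: Any) -> Any:
        allowed = user_role in self.policy.get(tool, set())
        # Record the attempt before enforcing the decision, so denials are audited too.
        self.audit_log.append(
            {"client": client, "role": user_role, "tool": tool, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"role {user_role!r} may not call {tool!r}")
        return self.tools[tool](**kwargs)


if __name__ == "__main__":
    gw = Gateway()
    gw.register_tool(
        "lookup_ticket",
        lambda ticket_id: {"id": ticket_id, "status": "open"},
        allowed_roles={"support"},
    )
    # Two different AI clients go through the same policy and audit trail.
    print(gw.call("slack-assistant", "support", "lookup_ticket", ticket_id="T-1"))
    try:
        gw.call("sales-copilot", "guest", "lookup_ticket", ticket_id="T-2")
    except PermissionError as err:
        print("denied:", err)
    print(len(gw.audit_log), "audited calls")
```

Adding another copilot or agent means pointing it at the same gateway; no policy or audit code is duplicated. A real deployment would also need authentication, durable audit storage, and error handling, which this sketch leaves out.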

A foundation that evolves with your AI strategy

AI ecosystems are not static. New models emerge, agents become more capable, and use cases expand. A centralized infrastructure layer absorbs that change. It allows enterprises to evolve their AI strategy continuously while maintaining consistent control, trust, and accuracy.

This is what enables AI to grow with the business instead of becoming another source of technical debt.

Why This Matters in the Age of Agentic AI

AI systems are beginning to take action, not just generate content. They search knowledge, make decisions, and initiate workflows. To operate safely, they need capabilities that point solutions cannot provide on their own.

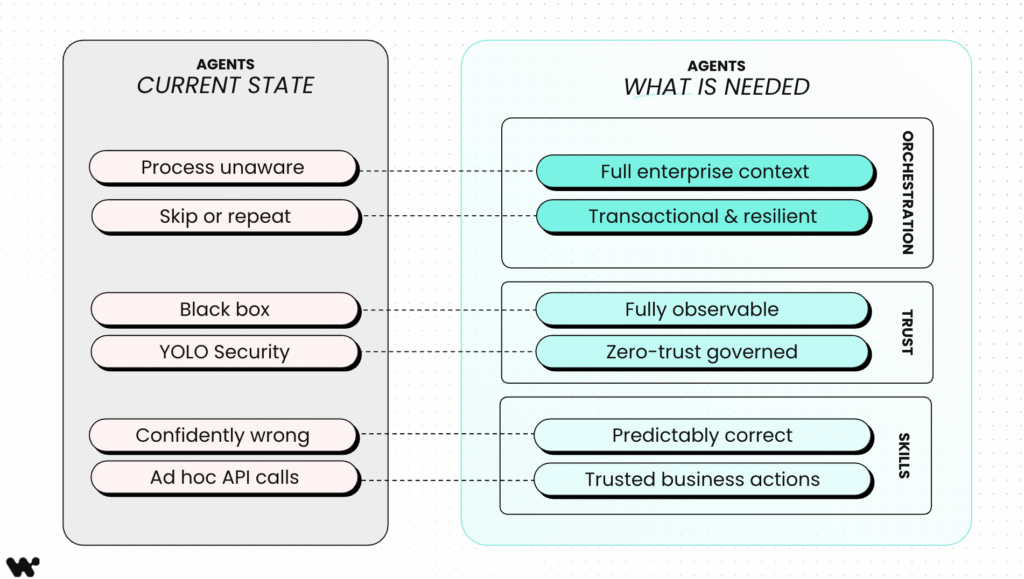

Today’s agents fail not because they lack intelligence, but because they lack enterprise foundations. Without shared context, orchestration, trust, and execution capabilities, agents remain unreliable, opaque, and unsafe to scale.

The gap is architectural. Most agents today operate without awareness of enterprise processes, without resilience, and without governance. What agents need is not another model or tool, but an infrastructure layer that provides orchestration, trust, and enterprise-grade execution.

1. Orchestrated context

AI needs unified access to enterprise data, historical activity, and real-time signals. A single context layer enables AI to understand situations and act consistently.

2. Trust and security

AI must operate within enterprise policies. A unified platform provides identity inheritance, verified actions, permission-aware search, and complete auditability so every action is safe and governed.
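Permission-aware search with identity inheritance can be sketched in a few lines. The sketch assumes each indexed document carries the access groups copied from its source system and that the agent searches on behalf of a specific user rather than with its own service account; `Document`, `User`, and `permission_aware_search` are hypothetical names, and the simple keyword match stands in for a real retrieval and ranking model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # hypothetical ACL copied from the source system


@dataclass(frozen=True)
class User:
    name: str
    groups: frozenset[str]


def permission_aware_search(user: User, query: str, index: list[Document]) -> list[str]:
    """Return IDs of matching documents the user could open in the source system.

    The agent inherits the identity of the user it acts for, so its results
    can never exceed that user's own access.
    """
    terms = query.lower().split()
    hits = []
    for doc in index:
        # Filter on permissions first, so restricted content is never matched or returned.
        if not (user.groups & doc.allowed_groups):
            continue
        # Placeholder relevance test: every query term must appear in the text.
        if all(term in doc.text.lower() for term in terms):
            hits.append(doc.doc_id)
    return hits
```

Checking permissions before matching, rather than filtering afterwards, means restricted documents never reach the model's context at all.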

3. Accuracy

AI must complete business actions reliably. Enterprise skills ensure that each action is validated, predictable, and aligned with business rules.
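One way to read "enterprise skills" is as named business actions whose inputs are checked against business rules before anything executes. The sketch below assumes that reading; `Skill`, `issue_refund`, and the 500 refund limit are hypothetical examples, not Workato's implementation.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# A rule inspects the arguments and returns an error message, or None if satisfied.
Rule = Callable[[dict], Optional[str]]


@dataclass(frozen=True)
class SkillResult:
    ok: bool
    message: str


@dataclass(frozen=True)
class Skill:
    """Hypothetical named business action an agent invokes instead of a raw API."""

    name: str
    rules: tuple[Rule, ...]
    action: Callable[[dict], str]

    def run(self, args: dict) -> SkillResult:
        # Evaluate every rule first; nothing executes unless all of them pass.
        errors = [msg for rule in self.rules if (msg := rule(args)) is not None]
        if errors:
            return SkillResult(False, "; ".join(errors))
        return SkillResult(True, self.action(args))


REFUND_LIMIT = 500.0  # hypothetical policy: larger refunds need human approval


def has_order(args: dict) -> Optional[str]:
    return None if args.get("order_id") else "order_id is required"


def positive_amount(args: dict) -> Optional[str]:
    return None if args.get("amount", 0) > 0 else "amount must be positive"


def within_limit(args: dict) -> Optional[str]:
    return None if args.get("amount", 0) <= REFUND_LIMIT else f"amount exceeds {REFUND_LIMIT:.0f}"


issue_refund = Skill(
    name="issue_refund",
    rules=(has_order, positive_amount, within_limit),
    action=lambda a: f"refunded {a['amount']:.2f} on order {a['order_id']}",
)
```

If the agent can reach the refund system only through `issue_refund.run`, a request for 900 is rejected with a readable reason instead of being executed, which is what makes the action predictable.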

These three pillars allow AI to move from isolated pilots into production environments with confidence.

Why Workato

Workato Enterprise MCP delivers the context, trust, and accuracy AI agents need to act across your enterprise, and it is built on the #1 iPaaS.

Enterprise scale

One runtime, one builder experience, and one governance model across integration, automation, search, and AI agents. Workato has been recognized by Gartner for strength in AI enablement and platform vision.

Enterprise MCP

Enterprise MCP is the trust, context, and orchestration layer that governs all AI activity. It unifies identity, security, workflows, verified actions, and enterprise skills so AI can act responsibly across systems.

Complete platform for AI and automation

Workato brings together Orchestrate for workflows, GO for enterprise search and Deep Action, and Agent Studio for building, deploying, and supervising AI agents. These capabilities share the same context engine and governance model.

Full flexibility, centralized control

Workato does not replace best-of-breed tools. It gives enterprises the freedom to adopt any AI models, agents, and applications while centralizing security, governance, and orchestration in one unified platform.

The New Standard for Enterprise AI

AI will continue to grow more distributed. Every SaaS application will have its own copilot, departments will build custom agents, and models will improve rapidly. The challenge is not choosing the right tools today, but creating an architecture that can support whatever comes next.

The solution is not fewer tools, and it is not locking into early winners. The solution is a centralized AI infrastructure layer that provides full flexibility at the edge and centralized control at the core.

By anchoring AI to an enterprise MCP platform, organizations gain consistent governance, reliable orchestration, shared context, and predictable outcomes, while retaining the freedom to adopt new models, agents, and copilots as the ecosystem evolves. Security, accuracy, observability, and trust remain constant, even as the tools change.

This is what full flexibility with centralized control looks like.

This is the foundation that allows AI to scale safely across the enterprise.

Workato provides that infrastructure layer.