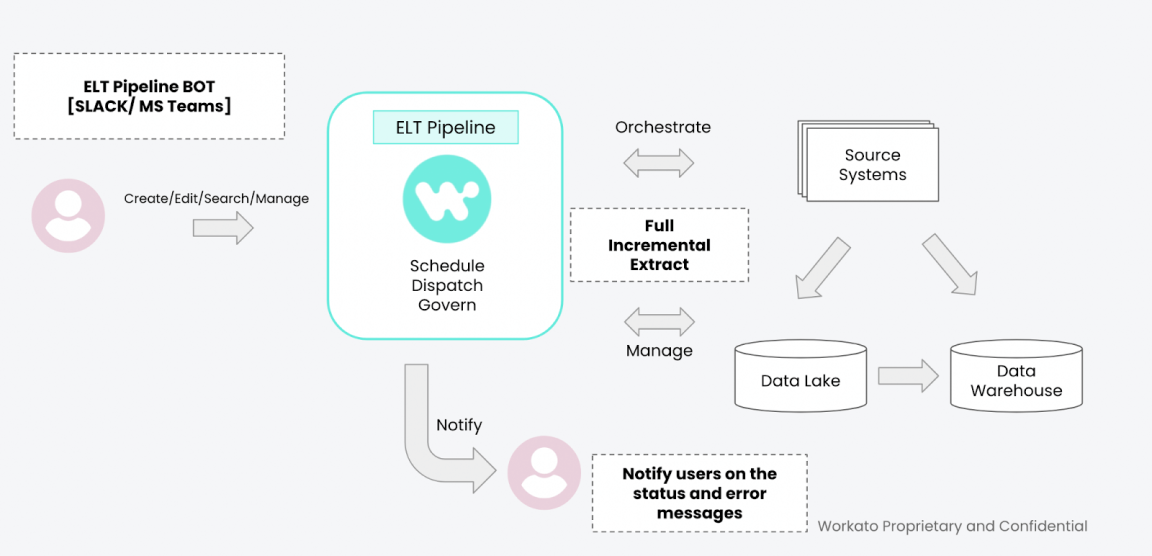

Modern enterprises are rapidly moving more of their data management to the cloud. Snowflake and S3 have emerged as leading technologies for Data Lake and Data Warehouse ELT use cases. Ingesting data effectively from the various applications and data stores across an enterprise requires an ELT pipeline. ELT pipelines create consistency across your environment, so your data services remain simple to support and issues can be addressed quickly when they arise.

The ELT Pipeline for Snowflake Accelerator comes with a set of design patterns, prebuilt recipes, best practices, and end-to-end architecture to enable a standardized data pipeline for ingesting data from a diverse set of sources including files, databases, on-prem applications, and SaaS applications.

Send any feedback on the accelerator to accelerators.feedback@workato.com.

Features include:

- Data Pipeline Bot UI Wizard

- ELT Scheduler

- Job Dispatcher

- Alerts

- Monitoring

- Bulk/Streaming Connectors

- Prebuilt Recipes

- Timestamp-based Change Data Capture